- Blog

- Menace to society full movie free hd putlocker

- Upgrade macbook pro hard drive 2014 m-2

- Best cyber monday deals 2021 buzzfeed

- Young jeezy tm103 zip

- Conexant high definition audio driver for vista

- Anyconnect vpn certificate validation failure

- Tomb raider games for mac os x

- Firestarter apk wont install on firestick

- Where do i find paintbrush for mac

- Proxima nova light font

- Linux os iso file download

- West chester university covid vaccine clinic

- Geforce mx 4000 driver

- How to use dropbox on mac

- C media usb audio device windows 7

- Wow legion ptr talent calculator

- Campaign cartographer 3 free download full windows 10

- Reconcile bloody download

- Setup ftp for wordpress azure vm

- Best games of 2017 free

- How to manually download mods factorio

- Sql software download free

- Remote for mac book pro

- Toon boom harmony mac

- Is there a free demo of forza 3 horizon for xbox 1

A crucial configuration here, though, is to set the table’s waitOnExternal to an object (can be empty) which will indicate to ADF that the blob is created outside ADF. The blob’s contents aren’t actually important, I just have the digit ‘1’ and as I expect my process to run daily I set the table’s availability to 1 Day. To satisfy ADF, I’ve created a table that points at a blob I’ve created in my storage account. In this scenario that input table is largely irrelevant as the real input data is what I’ll obtain from the FTP server The input tableĪ pipeline in ADF requires at least one input table. net activities so I sat down to create one but before I go into the details of the (very simple!) implementation, it would be useful to explain the context, and for simplicity I’ll describe a simple set-up that simply uses the activity to download a file on an FTP server to blob storage.

Reading and writing customer data is easily done using an on-premises data gateway, however at this point in time ADF does not have built in capability to obtain data from an FTP server location.

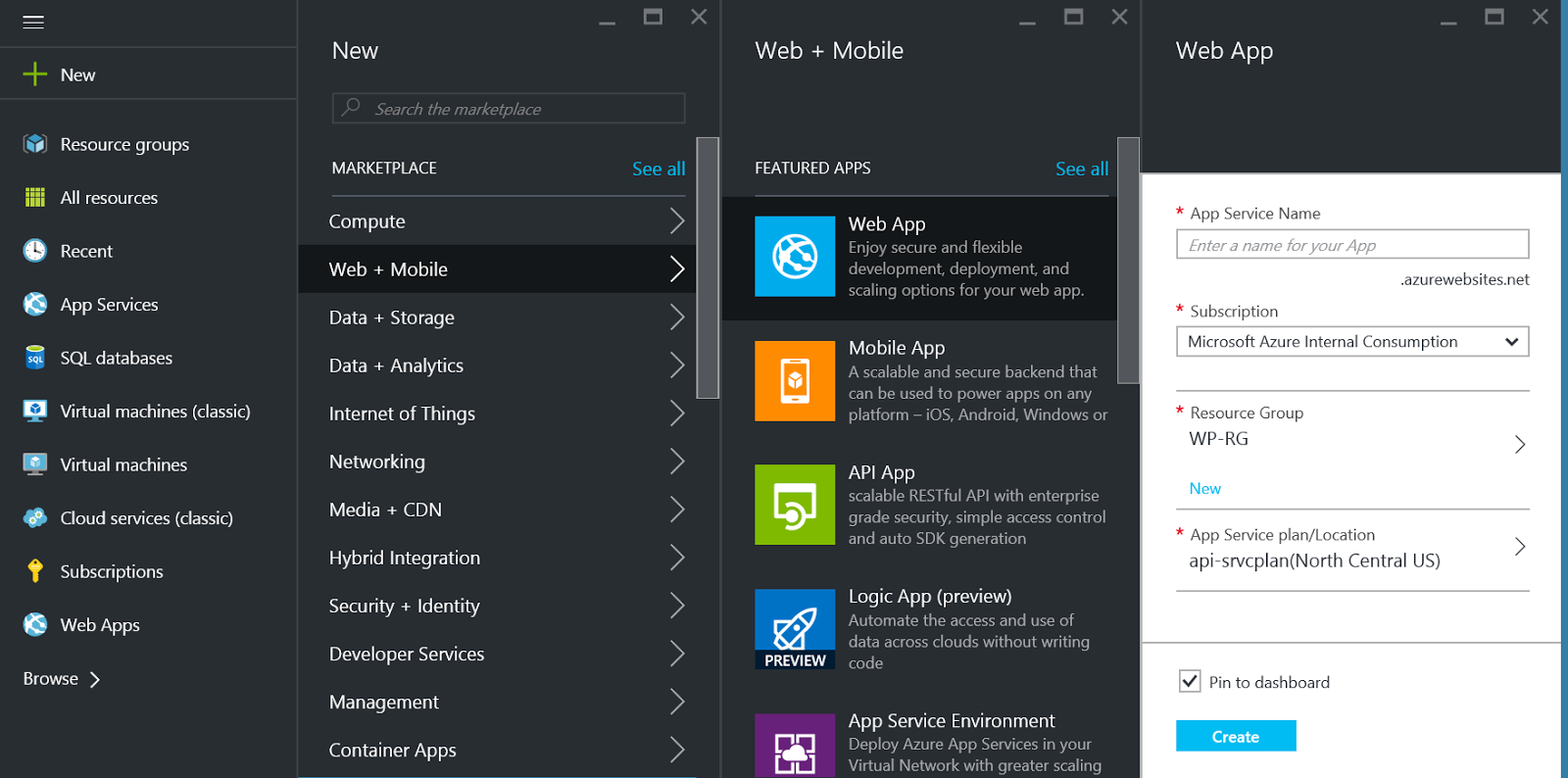

#Setup ftp for wordpress azure vm how to

The approach I wanted to look into is how to use Azure Data Factory (ADF) to obtain both the invoices and the customer data, store a snapshot of both data sets in blob storage, use HDInsight to perform the reconciliation and push the outcome (invoices to pay and list of line item exceptions) back to a local database. The process requires obtaining the suppliers invoices (typically from an FTP server) and matching it against the customer’s own data, flagging any exceptions. A customer I’m working with is looking to put in place a process to reconcile large invoices (=several million line items) from various suppliers.
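To make the input table configuration described above more concrete, here is a minimal sketch in Python that builds a table definition and prints it as JSON. Only the daily availability and the empty `waitOnExternal` object come from the post. The helper `build_input_table`, every name passed to it, and the exact nesting of the keys are assumptions for illustration, so check the layout against the ADF table schema for the version you are using.

```python
import json


def build_input_table(name, linked_service, folder_path, file_name):
    """Build a placeholder input table definition as a plain dict.

    Assumption: the key layout below is illustrative only and is not
    taken from the post; verify it against the ADF table schema.
    """
    return {
        "name": name,
        "properties": {
            # Points at the dummy blob that exists only to satisfy ADF.
            "location": {
                "type": "AzureBlobLocation",  # assumed type name
                "linkedServiceName": linked_service,
                "folderPath": folder_path,
                "fileName": file_name,
            },
            "availability": {
                # The process is expected to run daily.
                "frequency": "Day",
                "interval": 1,
                # An empty object is enough: it tells ADF that the blob
                # is produced outside ADF.
                "waitOnExternal": {},
            },
        },
    }


# All of these names are hypothetical placeholders.
input_table = build_input_table(
    name="DummyInputTable",
    linked_service="MyStorageLinkedService",
    folder_path="adf-input/",
    file_name="trigger.txt",
)

print(json.dumps(input_table, indent=2))
```

Building the definition in code rather than by hand keeps `waitOnExternal` from being dropped when the table is copied for other pipelines. Serialising it drops nothing either, because an empty dict becomes `{}` in the JSON output.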
